Computer Peripherals and Interfacing

Computer peripherals are external devices that enhance the functionality of a computer. They include input devices like keyboards and mice, output devices like printers and monitors, and storage devices like hard disk drives and solid-state drives. Interfacing circuits connect these peripherals to t

1 view • 6 slides

Enhancing Data Reception Performance with GPU Acceleration in CCSDS 131.2-B Protocol

Explore the utilization of Graphics Processing Unit (GPU) accelerators for high-performance data reception in a Software Defined Radio (SDR) system following the CCSDS 131.2-B protocol. The research, presented at the EDHPC 2023 Conference, focuses on implementing a state-of-the-art GP-GPU receiver t

0 views • 33 slides

Understanding Parallelism in GPU Computing by Martin Kruliš

This content covers the types of parallelism in GPU computing, such as task parallelism and data parallelism, discusses problems that are unsuitable for GPUs, and presents remedies such as iterative kernel execution and mapping irregular structures to regular grids. The article also touc

1 view • 39 slides

Overview of GPU Architecture and Memory Systems in NVIDIA Tegra X1

Dive into the intricacies of GPU architecture and memory systems with a detailed exploration of the NVIDIA Tegra X1 die photo, instruction fetching mechanisms, SIMT core organization, cache lockup problems, and efficient memory management techniques highlighted in the provided educational materials.

7 views • 62 slides

Understanding Input and Output Devices in Computing

In computing, input and output devices play a crucial role in enabling communication between users and computers. Input devices are used to enter data into a computer, while output devices display or provide the results of processed information. Common input devices include keyboards, mice, and joys

0 views • 17 slides

Parallel Implementation of Multivariate Empirical Mode Decomposition on GPU

Empirical Mode Decomposition (EMD) is a signal processing technique used for separating different oscillation modes in a time series signal. This paper explores the parallel implementation of Multivariate Empirical Mode Decomposition (MEMD) on GPU, discussing numerical steps, implementation details,

1 view • 15 slides

Exploring GPU Parallelization for 2D Convolution Optimization

Our project focuses on enhancing the efficiency of 2D convolutions by implementing parallelization with GPUs. We delve into the significance of convolutions, strategies for parallelization, challenges faced, and the outcomes achieved. Through comparing direct convolution to Fast Fourier Transform (F

0 views • 29 slides
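As a reference point for the direct convolution mentioned above, here is a minimal pure-Python sketch. The function name `conv2d_direct`, the nested-list layout, and the "valid" (no padding) output size are assumptions made for illustration; they are not taken from the project. Each output element is computed independently of the others, which is why a GPU version can assign one thread per output element.

```python
def conv2d_direct(image, kernel):
    """Direct 'valid' 2D convolution on nested lists (hypothetical helper).

    The kernel is flipped in both axes, as in the mathematical definition
    of convolution (as opposed to cross-correlation).
    """
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    oh, ow = ih - kh + 1, iw - kw + 1          # output shrinks without padding
    out = [[0.0] * ow for _ in range(oh)]
    for r in range(oh):                         # each (r, c) is independent work
        for c in range(ow):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[kh - 1 - i][kw - 1 - j]
            out[r][c] = acc
    return out


image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
kernel = [[1, 0], [0, -1]]
result = conv2d_direct(image, kernel)
```

The cost is proportional to the output size times the kernel size, which is the baseline an FFT-based approach is usually compared against for large kernels.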

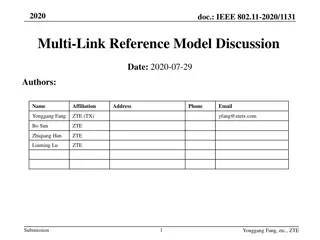

IEEE 802.11-2020 Multi-Link Reference Model Discussion

This contribution discusses the reference model to support multi-link operation in IEEE 802.11be and proposes architecture reference models to support multi-link devices. It covers aspects such as baseline architecture reference models, logical entities in different layers, Multi-Link Device (MLD) f

1 view • 19 slides

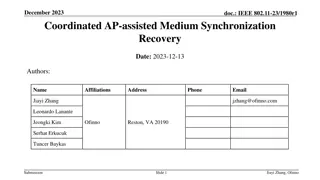

IEEE 802.11-23/1980r1 Coordinated AP-assisted Medium Synchronization Recovery

This document from December 2023 discusses medium synchronization recovery leveraging multi-AP coordination for multi-link devices. It covers features such as Multi-link device (MLD), Multi-link operation (MLO), and Ultra High Reliability (UHR) capability defined in P802.11bn for improvements in rat

0 views • 8 slides

GPU Scheduling Strategies: Maximizing Performance with Cache-Conscious Wavefront Scheduling

Explore GPU scheduling strategies including Loose Round Robin (LRR) for maximizing performance by efficiently managing warps, Cache-Conscious Wavefront Scheduling for improved cache utilization, and Greedy-then-oldest (GTO) scheduling to enhance cache locality. Learn how these techniques optimize GP

0 views • 21 slides

Understanding Modern GPU Computing: A Historical Overview

Delve into the fascinating history of Graphics Processing Units (GPUs), from the era of CPU-dominated graphics computation to the introduction of 3D accelerator cards, and the evolution of GPU architectures like the NVIDIA Volta-based GV100. Explore the peak performance comparison between CPUs and GPUs,

5 views • 20 slides

Efforts to Enable VFIO for RDMA and GPU Memory Access

Efforts are underway to enable VFIO for RDMA and GPU memory access through the creation and insertion of DEVICE_PCI_P2PDMA pages. This involves utilizing functions like hmm_range_fault and collaborating with companies like Mellanox, Nvidia, and RedHat to support non-ODP, pinned page mappings for imp

0 views • 16 slides

Redesigning the GPU Memory Hierarchy for Multi-Application Concurrency

This presentation delves into the innovative reimagining of GPU memory hierarchy to accommodate multiple applications concurrently. It explores the challenges of GPU sharing with address translation, high-latency page walks, and inefficient caching, offering insights into a translation-aware memory

1 view • 15 slides

Understanding GPU Rasterization and Graphics Pipeline

Delve into the world of GPU rasterization, from the history of GPUs and software rasterization to the intricacies of the Quake Engine, graphics pipeline, homogeneous coordinates, affine transformations, projection matrices, and lighting calculations. Explore concepts such as backface culling and dif

0 views • 17 slides

Improving GPGPU Performance with Cooperative Thread Array Scheduling Techniques

Limited DRAM bandwidth poses a critical bottleneck in GPU performance, necessitating a comprehensive scheduling policy to reduce cache miss rates, enhance DRAM bandwidth, and improve latency hiding for GPUs. The CTA-aware scheduling techniques presented address these challenges by optimizing resourc

0 views • 33 slides

Overview of Computer Input and Output Devices

Input devices of a computer system consist of external components like keyboard, mouse, light pen, joystick, scanner, microphone, and more, that provide information and instructions to the computer. On the other hand, output devices transfer information from the computer's CPU to the user through de

0 views • 11 slides

GPU-Accelerated Delaunay Refinement: Efficient Triangulation Algorithm

This study presents a novel approach for computing Delaunay refinement using GPU acceleration. The algorithm aims to generate a constrained Delaunay triangulation from a planar straight line graph efficiently, with improvements in termination handling and Steiner point management. By leveraging GPU

0 views • 23 slides

PipeSwitch: Fast Context Switching for Deep Learning Applications

PipeSwitch introduces fast pipelined context switching for deep learning applications, aiming to enable GPU-efficient multiplexing of multiple DL tasks with fine-grained time-sharing. The goal is to achieve millisecond-scale context switching overhead and high throughput, addressing the challenges o

1 view • 38 slides

vFireLib: Forest Fire Simulation Library on GPU

Dive into Jessica Smith's thesis defense on vFireLib, a forest fire simulation library implemented on the GPU. The research focuses on real-time GPU-based wildfire simulation for effective and safe wildfire suppression efforts, aiming to reduce costs and mitigate loss of habitat, property, and life.

0 views • 95 slides

Understanding GPU Programming Models and Execution Architecture

Explore the world of GPU programming with insights into GPU architecture, programming models, and execution models. Discover the evolution of GPUs and their importance in graphics engines and high-performance computing, as discussed by experts from the University of Michigan.

0 views • 28 slides

Microarchitectural Performance Characterization of Irregular GPU Kernels

GPUs are widely used for high-performance computing, but irregular algorithms pose challenges for parallelization. This study delves into the microarchitectural aspects affecting GPU performance, emphasizing best practices to optimize irregular GPU kernels. The impact of branch divergence, memory co

0 views • 26 slides

Advanced GPU Performance Modeling Techniques

Explore cutting-edge techniques in GPU performance modeling, including interval analysis, resource contention identification, detailed timing simulation, and balancing accuracy with efficiency. Learn how to leverage both functional simulation and analytical modeling to pinpoint performance bottlenec

0 views • 32 slides

Performance Aspects of Multi-link Operations in IEEE 802.11-19/1291r0

This document explores the performance aspects, benefits, and assumptions of multi-link operations in IEEE 802.11-19/1291r0. It discusses the motivation for multi-link operation in new wireless devices, potential throughput gains, classification of multi-link capabilities, and operation modes. The s

0 views • 30 slides

Multi-Stage, Multi-Resolution Beamforming Training for IEEE 802.11ay

In September 2016, a proposal was introduced to enhance the beamforming training procedures in IEEE 802.11ay for increased efficiency and MIMO support. The proposal suggests a multi-stage, multi-resolution beamforming training framework to improve efficiency in scenarios with high-resolution beams a

0 views • 11 slides

Communication Costs in Distributed Sparse Tensor Factorization on Multi-GPU Systems

This research paper presented an evaluation of communication costs for distributed sparse tensor factorization on multi-GPU systems. It discussed the background of tensors, tensor factorization methods like CP-ALS, and communication requirements in RefacTo. The motivation highlighted the dominance o

0 views • 34 slides

GPU Acceleration in ITK v4 Overview

This presentation by Won-Ki Jeong from Harvard University at the ITK v4 winter meeting in 2011 discusses the implementation and advantages of GPU acceleration in ITK v4. Topics covered include the use of GPUs as co-processors for massively parallel processing, memory and process management, new GPU

0 views • 33 slides

Understanding GPU-Accelerated Fast Fourier Transform

Today's lecture delves into the realm of GPU-accelerated Fast Fourier Transform (cuFFT), exploring the frequency content present in signals, Discrete Fourier Transform (DFT) formulations, roots of unity, and an alternative approach for DFT calculation. The lecture showcases the efficiency of GPU-bas

0 views • 40 slides
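The relationship between the DFT formulation and roots of unity can be sketched in a few lines of Python. This is an illustration only: it assumes the "alternative approach" is the usual radix-2 divide-and-conquer scheme, the function names `dft` and `fft` are hypothetical, and nothing here uses or reproduces cuFFT.

```python
import cmath


def dft(x):
    """Naive O(n^2) DFT: X[k] = sum_j x[j] * w**(j*k), w = exp(-2*pi*i/n)."""
    n = len(x)
    w = cmath.exp(-2j * cmath.pi / n)          # primitive n-th root of unity
    return [sum(x[j] * w ** (j * k) for j in range(n)) for k in range(n)]


def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2])                         # DFT of even-indexed samples
    odd = fft(x[1::2])                          # DFT of odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]   # twiddle factor
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


signal = [1, 2, 3, 4, 0, 0, 0, 0]
spectrum = fft(signal)
```

Both functions compute the same transform; the recursive version reuses the two half-size results, reducing the work from O(n^2) to O(n log n).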

Wireless Office Docking Model for Multiple Devices

This document outlines a usage model for office docking involving wireless connections between mobile devices and various peripheral devices such as monitors, hard drives, printers, and more. It describes scenarios for single and multiple devices in both home and office settings, emphasizing the nee

0 views • 5 slides

Understanding I/O Systems and Devices

I/O systems and devices play a crucial role in computer operations. They can be categorized into block devices and character devices based on their functionalities. Block devices store information in fixed-size blocks with addresses, while character devices handle character streams. Some devices, li

0 views • 19 slides

GPU Computing and Synchronization Techniques

Synchronization in GPU computing is crucial for managing shared resources and coordinating parallel tasks efficiently. Techniques such as __syncthreads() and atomic instructions help ensure data integrity and avoid race conditions in parallel algorithms. Examples requiring synchronization include Pa

0 views • 22 slides

Understanding GPU Performance for NFA Processing

Hongyuan Liu, Sreepathi Pai, and Adwait Jog delve into the challenges of GPU performance when executing NFAs. They address data movement and utilization issues, proposing solutions and discussing the efficiency of processing large-scale NFAs on GPUs. The research explores architectures and paralleli

0 views • 25 slides

Energy-Efficient Query Processing on Embedded CPU-GPU Architectures

This study explores the energy efficiency of query processing on embedded CPU-GPU architectures, focusing on the utilization of embedded GPUs and the potential for co-processing with CPUs. The research evaluates the performance and power consumption of different processing approaches, considering th

0 views • 22 slides

Maximizing GPU Throughput with HTCondor in 2023

Explore the integration of GPUs with HTCondor for efficient throughput computing in 2023. Learn how to enable GPUs on execution platforms, request GPUs for jobs, and configure job environments. Discover key considerations for jobs with specific GPU requirements and how to allocate GPUs effectively.

0 views • 22 slides

ZMCintegral: Python Package for Monte Carlo Integration on Multi-GPU Devices

ZMCintegral is an easy-to-use Python package designed for Monte Carlo integration on multi-GPU devices. It offers features such as random sampling within a domain, adaptive importance sampling using methods like Vegas, and leveraging TensorFlow-GPU backend for efficient computation. The package prov

0 views • 7 slides
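To make "random sampling within a domain" concrete, the following is a minimal single-threaded sketch of plain Monte Carlo integration. It does not use ZMCintegral or its API; the function `mc_integrate`, its parameters, and the fixed seed are assumptions chosen for illustration, and no importance sampling or GPU backend is involved.

```python
import random


def mc_integrate(f, bounds, n_samples=100_000, seed=0):
    """Estimate the integral of f over a box given as [(lo, hi), ...].

    Hypothetical helper: averages f at uniformly sampled points and
    scales the mean by the volume of the box.
    """
    rng = random.Random(seed)                   # local RNG for reproducibility
    volume = 1.0
    for lo, hi in bounds:
        volume *= hi - lo
    total = 0.0
    for _ in range(n_samples):
        point = [rng.uniform(lo, hi) for lo, hi in bounds]
        total += f(point)
    return volume * total / n_samples


# Integral of x*y over the unit square; the exact value is 0.25.
estimate = mc_integrate(lambda p: p[0] * p[1], [(0.0, 1.0), (0.0, 1.0)])
```

Because every sample is independent, the loop over samples is the part that multi-GPU packages distribute across devices.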

GPU Acceleration in ITK v4: Overview and Implementation

This presentation discusses the implementation of GPU acceleration in ITK v4, focusing on providing a high-level GPU abstraction, transparent resource management, code development status, and GPU core classes. Goals include speeding up certain types of problems and managing memory effectively.

0 views • 32 slides

Efficient Parallelization Techniques for GPU Ray Tracing

Dive into the world of real-time ray tracing with part 2 of this series, focusing on parallelizing your ray tracer for optimal performance. Explore the essentials needed before GPU ray tracing, handle materials, textures, and mesh files efficiently, and understand the complexities of rendering trian

0 views • 159 slides

IEEE 802.11-17: Enhancing Multi-Link Operation for Higher Throughput

The document discusses IEEE 802.11-17/xxxxr0 focusing on multi-link operation for achieving higher throughput. It covers motions adopted in the SFD related to asynchronous multi-link channel access, mechanisms for multi-link operation, and shared sequence number space. Additionally, it explores the

0 views • 14 slides

Overview of DICOM WG21 Multi-Energy Imaging Supplement

The DICOM WG21 Multi-Energy Imaging Supplement aims to address the challenges and opportunities in multi-energy imaging technologies, providing a comprehensive overview of imaging techniques, use cases, objectives, and potential clinical applications. The supplement discusses the definition of multi

0 views • 33 slides

Synchronization and Shared Memory in GPU Computing

Synchronization and shared memory play vital roles in optimizing parallelism in GPU computing. __syncthreads() enables thread synchronization within blocks, while atomic instructions ensure serialized access to shared resources. Examples like Parallel BFS and summing numbers highlight the need for s

0 views • 21 slides
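The role of a block-level barrier when summing numbers can be illustrated with a CPU analogy in Python. This sketch is not CUDA: `threading.Barrier` stands in for `__syncthreads()`, a plain list stands in for shared memory, and the function name `block_sum` is hypothetical. It assumes the input length is a power of two.

```python
import threading


def block_sum(values):
    """Tree reduction by len(values) cooperating threads (power-of-two length)."""
    n = len(values)
    shared = list(values)                # stands in for per-block shared memory
    barrier = threading.Barrier(n)       # stands in for __syncthreads()

    def worker(tid):
        stride = n // 2
        while stride > 0:
            if tid < stride:
                # Reads the upper half, writes the lower half: no overlap in a step.
                shared[tid] += shared[tid + stride]
            barrier.wait()               # all threads finish this step first
            stride //= 2

    threads = [threading.Thread(target=worker, args=(t,)) for t in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return shared[0]


total = block_sum([1, 2, 3, 4, 5, 6, 7, 8])
```

Without the barrier, a thread could start the next step and read a partial sum that another thread has not yet written. An atomic add into a single accumulator is the other option the entry mentions, at the cost of serializing every update.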

Fast Noncontiguous GPU Data Movement in Hybrid MPI+GPU Environments

This research focuses on enabling efficient and fast noncontiguous data movement between GPUs in hybrid MPI+GPU environments. The study explores techniques such as MPI-derived data types to facilitate noncontiguous message passing and improve communication performance in GPU-accelerated systems. By

0 views • 18 slides