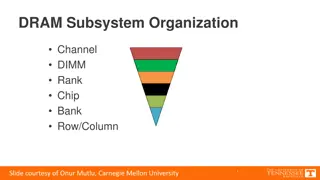

Understanding the Organization of DRAM Subsystem Components

Explore the intricate structure of the DRAM subsystem, including memory channels, DIMMs, ranks, chips, banks, and rows/columns. Delve into the breakdown of DIMMs, ranks, chips, and banks to comprehend the design and functioning of DRAM memory systems. Gain insights into address decoding, row/column

0 views • 16 slides

Understanding Cache and Virtual Memory in Computer Systems

A computer's memory system is crucial for ensuring fast and uninterrupted access to data by the processor. This system comprises internal processor memories, primary memory, and secondary memory such as hard drives. The utilization of cache memory helps bridge the speed gap between the CPU and main

2 views • 47 slides

Computer Architecture: Understanding SRAM and DRAM Memory Technologies

In the field of computer architecture, SRAM and DRAM are two prevalent memory technologies with distinct characteristics. SRAM retains data as long as power is present, while DRAM is dynamic and requires data refreshing. SRAM is built with high-speed CMOS technology, whereas DRAM is more dense and b

3 views • 38 slides

Understanding Shared Memory Architectures and Cache Coherence

Shared memory architectures involve multiple CPUs sharing one memory with a global address space, with challenges like the cache coherence problem. This summary delves into UMA and NUMA architectures, addressing issues like memory latency and bandwidth, as well as the bus-based UMA and NUMA shared m

0 views • 27 slides

Understanding Cache Memory in Computer Architecture

Cache memory is a crucial component in computer architecture that aims to accelerate memory accesses by storing frequently used data closer to the CPU. This faster access is achieved through SRAM-based cache, which offers much shorter cycle times compared to DRAM. Various cache mapping schemes are e

2 views • 20 slides

High-Throughput True Random Number Generation Using QUAC-TRNG

DRAM-based QUAC-TRNG provides high-throughput and low-latency true random number generation by utilizing commodity DRAM devices. By employing Quadruple Row Activation (QUAC), this method outperforms existing TRNGs, achieving a 15.08x improvement in throughput and passing all 15 NIST randomness tests

0 views • 10 slides

SIMDRAM: An End-to-End Framework for Bit-Serial SIMD Processing Using DRAM

SIMDRAM introduces a novel framework for efficient computation in DRAM, aiming to overcome data movement bottlenecks. It emphasizes Processing-in-Memory (PIM) and Processing-using-Memory (PuM) paradigms to enhance processing capabilities within DRAM while minimizing architectural changes. The motiva

2 views • 14 slides

GPU Scheduling Strategies: Maximizing Performance with Cache-Conscious Wavefront Scheduling

Explore GPU scheduling strategies including Loose Round Robin (LRR) for maximizing performance by efficiently managing warps, Cache-Conscious Wavefront Scheduling for improved cache utilization, and Greedy-then-oldest (GTO) scheduling to enhance cache locality. Learn how these techniques optimize GP

0 views • 21 slides

Understanding Shared Memory Architectures and Cache Coherence

Shared memory architectures involve multiple CPUs accessing a common memory, leading to challenges like the cache coherence problem. This article delves into different types of shared memory architectures, such as UMA and NUMA, and explores the cache coherence issue and protocols. It also highlights

2 views • 27 slides

Insights into DRAM Power Consumption and Design Concerns

Detailed experimental study reveals that DRAM power models may not provide accurate insights into power consumption. The increasing importance of managing DRAM power in system design is emphasized. The study delves into DRAM organization, operation, and power consumption patterns, highlighting the n

0 views • 43 slides

Improving GPGPU Performance with Cooperative Thread Array Scheduling Techniques

Limited DRAM bandwidth poses a critical bottleneck in GPU performance, necessitating a comprehensive scheduling policy to reduce cache miss rates, enhance DRAM bandwidth, and improve latency hiding for GPUs. The CTA-aware scheduling techniques presented address these challenges by optimizing resourc

0 views • 33 slides

Enhancing Multi-Node Systems with Coherent DRAM Caches

Exploring the integration of Coherent DRAM Caches in multi-node systems to improve memory performance. Discusses the benefits, challenges, and potential performance improvements compared to existing memory-side cache solutions.

0 views • 28 slides

Enhancing Memory Cache Efficiency with DRAM Compression Techniques

Explore the challenges faced by Moore's Law in relation to bandwidth limitations and the innovative solutions such as 3D-DRAM caches and compressed memory systems. Discover how compressing DRAM caches can improve bandwidth and capacity, leading to enhanced performance in memory-intensive application

0 views • 48 slides

Mitigating Conflict-Based Attacks in Modern Systems

CEASER presents a solution to protect Last-Level Cache (LLC) from conflict-based cache attacks using encrypted address space and remapping techniques. By avoiding traditional table-based randomization and instead employing encryption for cache mapping, CEASER aims to provide enhanced security with n

1 views • 21 slides

Amoeba Cache: Adaptive Blocks for Memory Hierarchy Optimization

The Amoeba Cache introduces adaptive blocks to optimize memory hierarchy utilization, eliminating waste by dynamically adjusting storage allocations. Factors influencing cache efficiency and application-specific behaviors are explored. Images and data distributions illustrate the effectiveness of th

0 views • 57 slides

Understanding Cache Memory Designs: Set vs Fully Associative Cache

Exploring the concepts of cache memory designs through Aaron Tan's NUS Lecture #23. Covering topics such as types of cache misses, block size trade-off, set associative cache, fully associative cache, block replacement policy, and more. Dive into the nuances of cache memory optimization and understa

0 views • 42 slides

Architecting DRAM Caches for Low Latency and High Bandwidth

Addressing fundamental latency trade-offs in designing DRAM caches involves considerations such as memory stacking for improved latency and bandwidth, organizing large caches at cache-line granularity to minimize wasted space, and optimizing cache designs to reduce access latency. Challenges include

0 views • 32 slides

Understanding RowPress: A New Read Disturbance Phenomenon in Modern DRAM Chips

Demonstrating and analyzing RowPress, a novel read disturbance phenomenon causing bitflips in DRAM chips. Different from RowHammer vulnerability, RowPress showcases effective solutions on real Intel systems with DRAM chips.

0 views • 46 slides

Panopticon: Complete In-DRAM Rowhammer Mitigation

Despite extensive research, DRAM remains vulnerable to Rowhammer attacks. The Panopticon project proposes a novel in-DRAM mitigation technique using counter mats within DRAM devices. This approach does not require costly changes at multiple layers and leverages existing DRAM logic for efficient miti

0 views • 17 slides

Understanding Cache Memory Organization in Computer Systems

Exploring concepts such as set-associative cache, direct-mapped cache, fully-associative cache, and replacement policies in cache memory design. Delve into topics like generality of set-associative caches, block mapping in different cache architectures, hit rates, conflicts, and eviction strategies.

0 views • 35 slides

Adaptive Insertion Policies for High-Performance Caching

Explore the concept of adaptive insertion policies in high-performance caching systems, focusing on mitigating the issue of Dead on Arrival (DoA) lines by making simple changes to cache insertion policies. Understanding cache replacement components, victim selection, and insertion policy can signifi

0 views • 15 slides

Understanding DRAM Errors: Implications for System Design

Exploring the nature of DRAM errors, this study delves into the causes, types, and implications for system design. From soft errors caused by cosmic rays to hard errors due to permanent hardware issues, the research examines error protection mechanisms and open questions surrounding DRAM errors. Pre

0 views • 31 slides

Efficient Handling of Cache Miss Rate in FPGAs

This study focuses on improving cache miss rate efficiency in FPGAs through the implementation of non-blocking caches and efficient Miss Status Holding Registers (MSHRs). By tracking more outstanding misses and utilizing memory-level parallelism, this approach proves to be more cost-effective than s

0 views • 44 slides

Defending Against Cache-Based Side-Channel Attacks

The content discusses strategies to mitigate cache-based side-channel attacks, focusing on the importance of constant-time programming to avoid timing vulnerabilities. It covers topics such as microarchitectural attacks, cache structure, Prime+Probe attack, and the Bernstein attack on AES. Through d

0 views • 25 slides

Understanding Latency Variation in Modern DRAM Chips

This research delves into the complexities of latency variation in modern DRAM chips, highlighting factors such as imperfect manufacturing processes and high standard latencies chosen to boost yield. The study aims to characterize latency variation, optimize DRAM performance, and develop mechanisms

0 views • 37 slides

Efficient Cache Management using The Dirty-Block Index

The Dirty-Block Index (DBI) is a solution to address inefficiencies in caches by removing dirty bits from cache tag stores, improving query response efficiency, and enabling various optimizations like DRAM-aware writeback. Its implementation leads to significant performance gains and cache area redu

0 views • 44 slides

Improving Cache Performance Through Read-Write Disparity

This study explores how exploiting the difference between read and write requests can enhance cache performance by prioritizing read over write operations. By dynamically partitioning the cache and protecting lines with more read hits, the proposed method demonstrates significant performance improve

0 views • 27 slides

Understanding Cache Memory in Computer Systems

Explore the intricate world of cache memory in computer systems through detailed explanations of how it functions, its types, and its role in enhancing system performance. Delve into the nuances of associative memory, valid and dirty bits, as well as fully associative examples to grasp the complexit

0 views • 15 slides

Understanding Power Consumption in Memory-Intensive Databases

This collection of research delves into the power challenges faced by memory-intensive databases (MMDBs) and explores strategies for reducing DRAM power draw. Topics covered include the impact of hardware features on power consumption, experimental setups for analyzing power breakdown, and the effec

0 views • 13 slides

Understanding Cache Coherency and Multi-Core Programming

Explore the intricate world of cache coherency and multi-core programming through images and descriptions covering topics such as how cache shares data between cores, maintaining data consistency, CPU architecture, memory caching, MESI protocol, and interconnect bus communication.

0 views • 97 slides

Understanding Cache Coherence in Computer Architecture

Exploring the concept of cache coherence in computer architecture, this content delves into the challenges and solutions associated with maintaining consistency among multiple caches in modern systems. It discusses the importance of coherence in shared memory systems and the use of cache-coherent me

0 views • 24 slides

Enhancing DRAM Performance with ChargeCache: A Novel Approach

Reduce average DRAM access latency by leveraging row access locality with ChargeCache, a cost-effective solution requiring no modifications to existing DRAM chips. By tracking recently accessed rows and adjusting timing parameters, ChargeCache achieves higher performance and lower DRAM energy consum

0 views • 33 slides

Intelligent DRAM Cache Strategies for Bandwidth Optimization

Efficiently managing DRAM caches is crucial due to increasing memory demands and bandwidth limitations. Strategies like using DRAM as a cache, architectural considerations for large DRAM caches, and understanding replacement policies are explored in this study to enhance memory bandwidth and capacit

0 views • 23 slides

Enhancing Data Movement Efficiency in DRAM with Low-Cost Inter-Linked Subarrays (LISA)

This research focuses on improving bulk data movement efficiency within DRAM by introducing Low-Cost Inter-Linked Subarrays (LISA). By providing wide connectivity between subarrays, LISA enables fast inter-subarray data transfers, reducing latency and energy consumption. Key applications include fas

0 views • 49 slides

CLR-DRAM: Dynamic Capacity-Latency Trade-off Architecture

CLR-DRAM introduces a low-cost DRAM architecture that enables dynamic configuration for high capacity or low latency at the granularity of a row. By allowing a single DRAM row to switch between max-capacity and high-performance modes, it reduces key timing parameters, improves system performance, an

0 views • 42 slides

Cache Replacement Policies and Enhancements in Fall 2023 Lecture 8 by Brandon Lucia

The Fall 2023 Lecture 8 by Brandon Lucia delves into cache replacement policies and enhancements for efficient memory management. The session covers the intricacies of replacement policies such as Round Robin, discussing evictions and block prioritization within cache sets. Visual aids and examples

0 views • 60 slides

Locality-Aware Caching Policies for Hybrid Memories

Different memory technologies present unique strengths, and a hybrid memory system combining DRAM and PCM aims to leverage the best of both worlds. This research explores the challenge of data placement between these diverse memory devices, highlighting the use of row buffer locality as a key criter

1 views • 34 slides

Maximizing Cache Hit Rate with LHD: An Overview

This presentation discusses the concept of Least Hit Density (LHD) for improving cache hit rates, focusing on the challenges and benefits of key-value caches in maximizing performance through efficient eviction policies like LRU. It emphasizes the importance of cache hit rates in enhancing web appli

0 views • 40 slides

Cache Replacement Policies in Distributed Systems: Key Considerations and Challenges

Explore the critical aspects of cache replacement policies in distributed systems, including cache consistency, update propagation, eviction strategies, and working sets. Dive into the implications of different policies like LRU and discover why certain access patterns may not be efficiently handled

0 views • 22 slides

Understanding the Impact of On-Die ECC on DRAM Error Characteristics

The BEER project explores how on-die ECC complicates DRAM reliability studies by concealing error characteristics. It aims to uncover the unique ECC function of DRAM chips and infer error locations in error-prone cells. The study highlights the challenges in identifying and correcting bit flips obfu

0 views • 17 slides