Understanding Memory Allocation in Operating Systems

Memory allocation in operating systems involves fair distribution of physical memory among running processes. The memory management subsystem ensures each process gets its fair share. Shared virtual memory and the efficient use of resources like dynamic libraries contribute to better memory utilizat

1 view • 233 slides

Understanding Memory Organization in Computers

The memory unit is crucial in any digital computer for storing programs and data. It comprises main memory, auxiliary memory, and cache memory, each serving different roles in data storage and retrieval. Main memory directly communicates with the CPU, while cache memory enhances processing speed by

1 view • 37 slides

Understanding Memory Organization in Computers

Delve into the intricate world of memory organization within computer systems, exploring the vital role of memory units, cache memory, main memory, auxiliary memory, and the memory hierarchy. Learn about the different types of memory, such as sequential access memory and random access memory, and ho

0 views • 45 slides

Understanding Computer Memory Systems and Organization

Memory systems in computer architecture play a crucial role in data storage and processing. They vary in purpose, performance, and capacity, from temporary storage like RAM to permanent storage. The main goal of a memory system is to ensure fast and uninterrupted access for the processor to enhance

0 views • 33 slides

Understanding Cache and Virtual Memory in Computer Systems

A computer's memory system is crucial for ensuring fast and uninterrupted access to data by the processor. This system comprises internal processor memories, primary memory, and secondary memory such as hard drives. The utilization of cache memory helps bridge the speed gap between the CPU and main

1 view • 47 slides

Dynamic Memory Allocation in Computer Systems: An Overview

Dynamic memory allocation in computer systems involves the acquisition of virtual memory at runtime for data structures whose size is only known at runtime. This process is managed by dynamic memory allocators, such as malloc, to handle memory invisible to user code, application kernels, and virtual

0 views • 70 slides

Understanding Shared Memory Architectures and Cache Coherence

Shared memory architectures involve multiple CPUs sharing one memory with a global address space, with challenges like the cache coherence problem. This summary delves into UMA and NUMA architectures, addressing issues like memory latency and bandwidth, as well as the bus-based UMA and NUMA shared m

0 views • 27 slides

Understanding Cache Memory in Computer Architecture

Cache memory is a crucial component in computer architecture that aims to accelerate memory accesses by storing frequently used data closer to the CPU. This faster access is achieved through SRAM-based cache, which offers much shorter cycle times compared to DRAM. Various cache mapping schemes are e

2 views • 20 slides

Understanding Memory Management in Operating Systems

Dive into the world of memory management in operating systems, covering topics such as virtual memory, page replacement algorithms, memory allocation, and more. Explore concepts like memory partitions, fixed partitions, memory allocation mechanisms, base and limit registers, and the trade-offs betwe

1 view • 110 slides

GPU Scheduling Strategies: Maximizing Performance with Cache-Conscious Wavefront Scheduling

Explore GPU scheduling strategies including Loose Round Robin (LRR) for maximizing performance by efficiently managing warps, Cache-Conscious Wavefront Scheduling for improved cache utilization, and Greedy-then-oldest (GTO) scheduling to enhance cache locality. Learn how these techniques optimize GP

0 views • 21 slides

Understanding Shared Memory Architectures and Cache Coherence

Shared memory architectures involve multiple CPUs accessing a common memory, leading to challenges like the cache coherence problem. This article delves into different types of shared memory architectures, such as UMA and NUMA, and explores the cache coherence issue and protocols. It also highlights

2 views • 27 slides

Understanding Memory Management and Swapping Techniques

Memory management involves techniques like swapping, memory allocation changes, memory compaction, and memory management with bitmaps. Swapping refers to bringing each process into memory entirely, running it for a while, then putting it back on the disk. Memory allocation can change as processes en

0 views • 17 slides

Understanding Memory Encoding and Retention Processes

Memory is the persistence of learning over time, involving encoding, storage, and retrieval of information. Measures of memory retention include recall, recognition, and relearning. Ebbinghaus' retention curve illustrates the relationship between practice and relearning. Psychologists use memory mod

0 views • 22 slides

Amoeba Cache: Adaptive Blocks for Memory Hierarchy Optimization

The Amoeba Cache introduces adaptive blocks to optimize memory hierarchy utilization, eliminating waste by dynamically adjusting storage allocations. Factors influencing cache efficiency and application-specific behaviors are explored. Images and data distributions illustrate the effectiveness of th

0 views • 57 slides

Understanding Memory Management in Computer Systems

Memory management in computer systems involves optimizing CPU utilization, managing data in memory before and after processing, allocating memory space efficiently, and keeping track of memory usage. It determines what is in memory, moves data in and out as needed, and involves caching at various le

1 view • 21 slides

Understanding Cache Memory Designs: Set vs Fully Associative Cache

Exploring the concepts of cache memory designs through Aaron Tan's NUS Lecture #23. Covering topics such as types of cache misses, block size trade-off, set associative cache, fully associative cache, block replacement policy, and more. Dive into the nuances of cache memory optimization and understa

0 views • 42 slides

Dynamic Memory Management Overview

Understanding dynamic memory management is crucial in programming to efficiently allocate and deallocate memory during runtime. The memory is divided into the stack and the heap, each serving specific purposes in storing local and dynamic data. Dynamic memory allocators organize the heap for efficie

0 views • 31 slides

Architecting DRAM Caches for Low Latency and High Bandwidth

Addressing fundamental latency trade-offs in designing DRAM caches involves considerations such as memory stacking for improved latency and bandwidth, organizing large caches at cache-line granularity to minimize wasted space, and optimizing cache designs to reduce access latency. Challenges include

0 views • 32 slides

Understanding Cache Memory Organization in Computer Systems

Exploring concepts such as set-associative cache, direct-mapped cache, fully-associative cache, and replacement policies in cache memory design. Delve into topics like generality of set-associative caches, block mapping in different cache architectures, hit rates, conflicts, and eviction strategies.

0 views • 35 slides

Adaptive Insertion Policies for High-Performance Caching

Explore the concept of adaptive insertion policies in high-performance caching systems, focusing on mitigating the issue of Dead on Arrival (DoA) lines by making simple changes to cache insertion policies. Understanding cache replacement components, victim selection, and insertion policy can signifi

0 views • 15 slides

Understanding Memory Basics in Digital Systems

Dive into the world of digital memory systems with a focus on Random Access Memory (RAM), memory capacities, SI prefixes, logical models of memory, and example memory symbols. Learn about word sizes, addresses, data transfer, and capacity calculations to gain a comprehensive understanding of memory

1 view • 12 slides

Understanding Cache Memory in Computer Systems

Explore the intricate world of cache memory in computer systems through detailed explanations of how it functions, its types, and its role in enhancing system performance. Delve into the nuances of associative memory, valid and dirty bits, as well as fully associative examples to grasp the complexit

0 views • 15 slides

Understanding Caching and Virtual Memory Concepts

Exploring the fundamental concepts of caching and demand-paged virtual memory in computer systems. Topics covered include cache definitions, memory hierarchy, cache concepts for reading and writing, main points on memory management techniques, hardware address translation, demand paging process, and

1 view • 46 slides

Understanding Different Types of Memory Technologies in Computer Systems

Explore the realm of memory technologies with an overview of ROM, RAM, non-volatile memories, and programmable memory options. Delve into the intricacies of read-only memory, volatile vs. non-volatile memory, and the various types of memory dimensions. Gain insights into the workings of ROM, includi

0 views • 45 slides

Understanding Shared Memory, Distributed Memory, and Hybrid Distributed-Shared Memory

Shared memory systems allow multiple processors to access the same memory resources, with changes made by one processor visible to all others. This concept is categorized into Uniform Memory Access (UMA) and Non-Uniform Memory Access (NUMA) architectures. UMA provides equal access times to memory, w

0 views • 22 slides

Understanding Virtual Memory Concepts and Benefits

Virtual memory, as presented by Shmuel Wimer, separates logical memory from physical memory, enabling efficient use of memory resources. With virtual memory, programs can run while only partially loaded in memory, which reduces the constraints imposed by physical memory limits. This also enhances CPU utilizatio

0 views • 41 slides

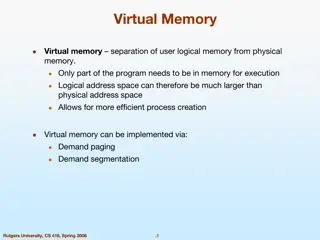

Understanding Virtual Memory and its Implementation

Virtual memory allows for the separation of user logical memory from physical memory, enabling efficient process creation and effective memory management. It helps overcome memory shortage issues by utilizing demand paging and segmentation techniques. Virtual memory mapping ensures only required par

0 views • 20 slides

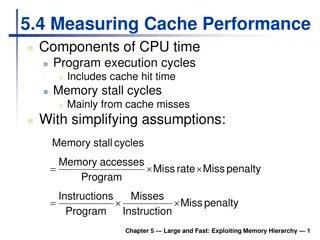

Understanding Cache Performance Components and Memory Hierarchy

Exploring cache performance components, such as hit time and memory stall cycles, is crucial for evaluating system performance. By analyzing factors like miss rates and penalties, one can optimize CPU efficiency and reduce memory stalls. Associative caches offer flexible options for organizing data

0 views • 22 slides

Understanding Memory: Challenges and Improvement

Delve into the intricacies of memory with discussions on earliest and favorite memories, a memory challenge, how memory works, stages of memory, and tips to enhance memory recall. Explore the significance of memory and practical exercises for memory improvement.

0 views • 16 slides

Trace-Driven Cache Simulation in Advanced Computer Architecture

Trace-driven simulation is a key method for assessing memory hierarchy performance, particularly focusing on hits and misses. Dinero IV is a cache simulator used for memory reference traces without timing simulation capabilities. The tool aids in evaluating cache hit and miss results but does not ha

0 views • 13 slides

Understanding Cache Coherence in Computer Architecture

Exploring the concept of cache coherence in computer architecture, this content delves into the challenges and solutions associated with maintaining consistency among multiple caches in modern systems. It discusses the importance of coherence in shared memory systems and the use of cache-coherent me

0 views • 24 slides

Memory Management Principles in Operating Systems

Memory management in operating systems involves the allocation of memory resources among competing processes to optimize performance with minimal overhead. Techniques such as partitioning, paging, and segmentation are utilized, along with page table management and virtual memory tricks. The concept

0 views • 29 slides

Intelligent DRAM Cache Strategies for Bandwidth Optimization

Efficiently managing DRAM caches is crucial due to increasing memory demands and bandwidth limitations. Strategies like using DRAM as a cache, architectural considerations for large DRAM caches, and understanding replacement policies are explored in this study to enhance memory bandwidth and capacit

0 views • 23 slides

Understanding Memory Hierarchy in Parallel Computer Architecture

This content delves into the intricacies of memory hierarchy, caches, and the management of virtual versus physical memory in parallel computer architecture. It discusses topics such as cache compression, the programmer's view of memory, virtual versus physical memory, and the ideal pipeline for ins

1 view • 86 slides

Cache Replacement Policies and Enhancements in Fall 2023 Lecture 8 by Brandon Lucia

The Fall 2023 Lecture 8 by Brandon Lucia delves into cache replacement policies and enhancements for efficient memory management. The session covers the intricacies of replacement policies such as Round Robin, discussing evictions and block prioritization within cache sets. Visual aids and examples

0 views • 60 slides

Enhancing Memory Bandwidth with Transparent Memory Compression

This research focuses on enabling transparent memory compression for commodity memory systems to address the growing demand for memory bandwidth. By implementing hardware compression without relying on operating system support, the goal is to optimize memory capacity and bandwidth efficiently. The a

0 views • 34 slides

Locality-Aware Caching Policies for Hybrid Memories

Different memory technologies present unique strengths, and a hybrid memory system combining DRAM and PCM aims to leverage the best of both worlds. This research explores the challenge of data placement between these diverse memory devices, highlighting the use of row buffer locality as a key criter

0 views • 34 slides

Maximizing Cache Hit Rate with LHD: An Overview

This presentation discusses the concept of Least Hit Density (LHD) for improving cache hit rates, focusing on the challenges and benefits of key-value caches in maximizing performance through efficient eviction policies like LRU. It emphasizes the importance of cache hit rates in enhancing web appli

0 views • 40 slides

Cooperative Cache Scrubbing for Efficient Memory Management in Multicore Systems

Cooperative Cache Scrubbing optimizes memory management in multicore systems by efficiently handling short-lived application objects and reducing unnecessary data writes to memory. By communicating semantic information to hardware caches, dead lines are scrubbed, dirty bits unset, and unnecessary fe

0 views • 40 slides

Cache Replacement Policies in Distributed Systems: Key Considerations and Challenges

Explore the critical aspects of cache replacement policies in distributed systems, including cache consistency, update propagation, eviction strategies, and working sets. Dive into the implications of different policies like LRU and discover why certain access patterns may not be efficiently handled

0 views • 22 slides