Integrating DPDK/SPDK with Storage Applications for Improved Performance

This presentation discusses the challenges faced by legacy storage applications and proposes solutions based on integrating the DPDK/SPDK libraries. Topics include the current state of legacy applications, issues such as heavy system calls and frequent memory allocations, possible solutions such as lockless data structures and the reactor pattern, and techniques for integrating DPDK libraries with application libraries. The goal is to improve scalability, reduce context switches, and raise performance in storage applications.


Presentation Transcript

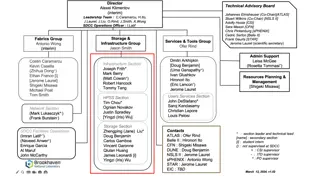

Integrating DPDK/SPDK with storage applications
Vishnu Itta, Mayank Patel
Containerized Storage for Containers

Agenda
- Problem statement
- Current state of legacy applications and their issues
- Possible solutions
- Integrating DPDK-related libraries with legacy applications
- A client/server approach to using SPDK
- Future work

State of legacy storage applications
- Heavy reliance on locks for synchronization
- Heavy use of system calls and context switches
- Dependency on the kernel for scheduling
- Frequent memory allocations
- Dependency on locks, and heavy page faults due to large memory requirements
- Data copied multiple times between userspace and the device
This leads to:
- Poor scaling with current hardware trends
- More context switches
- Higher IO latencies

Possible solutions
- Lockless data structures
- Reactor pattern
- Use of hugepages
- Integrating with the userspace NVMe driver from SPDK
This leads to:
- Scalability with the number of CPUs
- Fewer context switches
- Better performance
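The reactor pattern listed above can be illustrated with a single thread that repeatedly calls short, non-blocking poller functions instead of sleeping in the kernel between events; SPDK's event framework runs one such reactor per core. The sketch below is a minimal stand-in for that idea, not SPDK's API: `reactor_t`, `reactor_register`, `reactor_run`, and the fake completion-queue poller are all hypothetical.

```c
#include <stdbool.h>
#include <stddef.h>

#define MAX_POLLERS 8

/* A poller does a bounded amount of non-blocking work and returns how many
 * events it handled; it must never block the reactor thread. */
typedef int (*poller_fn)(void *ctx);

typedef struct {
    poller_fn fn[MAX_POLLERS];
    void *ctx[MAX_POLLERS];
    size_t n;
    bool stop;
} reactor_t;

static bool reactor_register(reactor_t *r, poller_fn fn, void *ctx) {
    if (r->n == MAX_POLLERS)
        return false;
    r->fn[r->n] = fn;
    r->ctx[r->n] = ctx;
    r->n++;
    return true;
}

/* Run-to-completion loop: one thread, no locks, no blocking system calls.
 * Returns the total number of events handled before `stop` was set. */
static long reactor_run(reactor_t *r) {
    long handled = 0;
    while (!r->stop) {
        for (size_t i = 0; i < r->n; i++)
            handled += r->fn[i](r->ctx[i]);
    }
    return handled;
}

/* Hypothetical poller: pretends one I/O completes per call and stops the
 * reactor once no completions are pending. */
typedef struct {
    reactor_t *r;
    int pending;
} fake_cq_t;

static int fake_cq_poll(void *ctx) {
    fake_cq_t *cq = ctx;
    if (cq->pending == 0) {
        cq->r->stop = true;
        return 0;
    }
    cq->pending--;
    return 1;
}
```

Because every poller runs on the reactor's own thread, the state it touches needs no lock; the trade-off is that the thread keeps spinning on its core even when there is no work.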

Integrating DPDK related libraries with storage applications

Linking DPDK with the application
- Shared library: CONFIG_RTE_BUILD_SHARED_LIB=y
- Static library: CONFIG_RTE_BUILD_SHARED_LIB=n
- Use the rte.ext* or rte.* Makefiles from dpdk/mk:
  - For building the application: rte.extapp.mk
  - For shared libraries: rte.extlib.mk, rte.extshared.mk
  - For building object files: rte.extobj.mk

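Integration of DPDK libraries with application libraries
- DPDK libraries use constructors (functions that run at load time, before main()) to register their components
- Force linking of otherwise unused libraries into the shared library so their constructors are not dropped: LDFLAGS += --no-as-needed -lrte_mempool --as-needed
- Call rte_eal_init() from the legacy application to initialize the EAL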
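Why the constructors matter can be shown without DPDK itself. DPDK libraries register components such as mempool handlers (through `MEMPOOL_REGISTER_OPS`) from constructor functions that run before `main()`. If the linker drops a shared library because the application references none of its symbols, that constructor never runs and the registration silently disappears, which is what the `--no-as-needed` flags above prevent. The registry below is a hypothetical stand-in for such a table (only the handler name `ring_mp_mc` is borrowed from DPDK) and relies on the GCC/Clang `__attribute__((constructor))` extension.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical handler registry, standing in for a table such as
 * DPDK's mempool ops table. */
#define MAX_HANDLERS 4

struct handler {
    const char *name;
    int (*alloc)(void);
};

static struct handler registry[MAX_HANDLERS];
static size_t nr_handlers;

static void register_handler(const char *name, int (*alloc)(void)) {
    if (nr_handlers < MAX_HANDLERS) {
        registry[nr_handlers].name = name;
        registry[nr_handlers].alloc = alloc;
        nr_handlers++;
    }
}

static const struct handler *lookup_handler(const char *name) {
    for (size_t i = 0; i < nr_handlers; i++)
        if (strcmp(registry[i].name, name) == 0)
            return &registry[i];
    return NULL;
}

/* --- In a real build this part would live in a separate shared library. --- */
static int ring_alloc(void) { return 42; }

/* Runs before main(). If the library containing it is never loaded,
 * "ring_mp_mc" is never registered and lookups fail at runtime. */
__attribute__((constructor))
static void register_ring_handler(void) {
    register_handler("ring_mp_mc", ring_alloc);
}
```

Nothing in the application calls `register_ring_handler` directly, so the only thing keeping the registration alive is the library being linked and loaded.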

Memory allocation with the rte_eal library
- Memory is allocated from hugepages
- Lower address-translation overhead: far fewer TLB entries and page faults than with regular 4 KiB pages
- Issues with this approach in legacy multi-threaded applications:
  - Spinlock contention among multiple threads
  - Threads need dedicated cores
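Outside of DPDK, the same hugepage idea can be shown with plain Linux `mmap()`. This sketch asks for an anonymous hugepage mapping with `MAP_HUGETLB` and falls back to regular pages when none are reserved (for example, when `/proc/sys/vm/nr_hugepages` is 0). One 2 MiB page covers what would otherwise need 512 page-table and TLB entries of 4 KiB each, which is where the reduced translation overhead comes from. It is Linux-specific, assumes a 2 MiB default hugepage size, and is an illustration only, not how `rte_malloc()` is implemented.

```c
#define _GNU_SOURCE
#include <stddef.h>
#include <string.h>
#include <sys/mman.h>

#define HUGEPAGE_SIZE (2UL * 1024 * 1024) /* assumption: 2 MiB hugepages */

typedef struct {
    void *addr;
    size_t len;
    int huge; /* 1 if backed by hugepages, 0 if by regular pages */
} region_t;

/* Try a hugepage-backed anonymous mapping first, then fall back. */
static int region_alloc(region_t *r, size_t len) {
    /* Round up to whole hugepages: MAP_HUGETLB mappings are sized in
     * hugepage units. */
    len = (len + HUGEPAGE_SIZE - 1) & ~(HUGEPAGE_SIZE - 1);
    void *p = mmap(NULL, len, PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS | MAP_HUGETLB, -1, 0);
    r->huge = 1;
    if (p == MAP_FAILED) {
        /* No hugepages reserved: use regular pages instead. */
        p = mmap(NULL, len, PROT_READ | PROT_WRITE,
                 MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
        r->huge = 0;
    }
    if (p == MAP_FAILED)
        return -1;
    r->addr = p;
    r->len = len;
    return 0;
}

static void region_free(region_t *r) {
    munmap(r->addr, r->len);
    r->addr = NULL;
}
```

DPDK goes further by reserving and pre-faulting its hugepage memory at `rte_eal_init()` time and then carving allocations out of it in userspace, which is where the shared heap spinlock mentioned above comes in.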

Cache-based allocation with the mempool library
- Allocator of fixed-size objects
- Ring-based handler for managing object blocks
- Each get/put request performs CAS (compare-and-swap) operations
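A fixed-size object allocator backed by a ring of free-object indices can be sketched in portable C11. This is not DPDK's implementation: it swaps in Dmitry Vyukov's bounded MPMC queue algorithm instead of DPDK's head/tail ring, and it omits the per-lcore object cache that DPDK's mempool places in front of the ring, so every get and put performs a compare-and-swap on a shared index, which is the cost the slide points at. `pool_t`, `pool_get`, and `pool_put` are hypothetical names.

```c
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define POOL_OBJS 8  /* number of objects; must be a power of two */
#define OBJ_SIZE  64 /* bytes per fixed-size object */

typedef struct {
    _Atomic size_t seq; /* sequence number coordinating producers/consumers */
    size_t idx;         /* index of a free object */
} slot_t;

typedef struct {
    slot_t slots[POOL_OBJS];
    _Atomic size_t head; /* next ring position to dequeue (get) */
    _Atomic size_t tail; /* next ring position to enqueue (put) */
    unsigned char objs[POOL_OBJS][OBJ_SIZE];
} pool_t;

/* Enqueue a free-object index: one successful CAS on `tail` per put. */
static bool ring_enqueue(pool_t *p, size_t idx) {
    size_t pos = atomic_load_explicit(&p->tail, memory_order_relaxed);
    for (;;) {
        slot_t *s = &p->slots[pos & (POOL_OBJS - 1)];
        size_t seq = atomic_load_explicit(&s->seq, memory_order_acquire);
        intptr_t diff = (intptr_t)seq - (intptr_t)pos;
        if (diff == 0) {
            if (atomic_compare_exchange_weak_explicit(&p->tail, &pos, pos + 1,
                    memory_order_relaxed, memory_order_relaxed)) {
                s->idx = idx;
                atomic_store_explicit(&s->seq, pos + 1, memory_order_release);
                return true;
            } /* CAS failure reloaded `pos`; retry */
        } else if (diff < 0) {
            return false; /* ring full */
        } else {
            pos = atomic_load_explicit(&p->tail, memory_order_relaxed);
        }
    }
}

/* Dequeue a free-object index: one successful CAS on `head` per get. */
static bool ring_dequeue(pool_t *p, size_t *idx) {
    size_t pos = atomic_load_explicit(&p->head, memory_order_relaxed);
    for (;;) {
        slot_t *s = &p->slots[pos & (POOL_OBJS - 1)];
        size_t seq = atomic_load_explicit(&s->seq, memory_order_acquire);
        intptr_t diff = (intptr_t)seq - (intptr_t)(pos + 1);
        if (diff == 0) {
            if (atomic_compare_exchange_weak_explicit(&p->head, &pos, pos + 1,
                    memory_order_relaxed, memory_order_relaxed)) {
                *idx = s->idx;
                /* Hand the slot back to enqueuers one lap later. */
                atomic_store_explicit(&s->seq, pos + POOL_OBJS, memory_order_release);
                return true;
            }
        } else if (diff < 0) {
            return false; /* ring empty: pool exhausted */
        } else {
            pos = atomic_load_explicit(&p->head, memory_order_relaxed);
        }
    }
}

static void pool_init(pool_t *p) {
    for (size_t i = 0; i < POOL_OBJS; i++)
        atomic_init(&p->slots[i].seq, i);
    atomic_init(&p->head, 0);
    atomic_init(&p->tail, 0);
    for (size_t i = 0; i < POOL_OBJS; i++)
        ring_enqueue(p, i); /* every object starts out free */
}

static void *pool_get(pool_t *p) {
    size_t idx;
    return ring_dequeue(p, &idx) ? p->objs[idx] : NULL;
}

static void pool_put(pool_t *p, void *obj) {
    size_t idx = (size_t)((unsigned char *)obj - &p->objs[0][0]) / OBJ_SIZE;
    ring_enqueue(p, idx);
}
```

A per-thread cache in front of `pool_get`/`pool_put` would let most requests skip the shared CAS entirely, which is the point of the "cache-based" design.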

Ring library to synchronize thread operations
- One thread processes the operations
- Messages pass between the core thread and the other threads through a ring buffer
- A single CAS operation per message
- Issue: a CPU core must be dedicated to that thread

But is there a way to integrate SPDK with legacy applications in their current state without much redesign?
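The message-passing design can be sketched with POSIX threads and C11 atomics: several application threads submit operations into a bounded ring, and a single core thread dequeues and applies them, so the state it owns needs no lock. Submitters claim a slot with one compare-and-swap; the lone consumer needs none. The ring is a simplified multi-producer/single-consumer adaptation of the Vyukov bounded queue, not `rte_ring`, and every name here (`core_ctx_t`, `submit`, `take`, `run_demo`) is hypothetical.

```c
#include <pthread.h>
#include <sched.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define RING_SIZE 64 /* must be a power of two */
#define MAX_PRODUCERS 16

typedef struct { _Atomic size_t seq; long op; } msg_slot_t;

typedef struct {
    msg_slot_t slots[RING_SIZE];
    _Atomic size_t tail;        /* shared by all submitting threads */
    size_t head;                /* touched only by the core thread */
    _Atomic int producers_left;
    long state;                 /* owned by the core thread: no lock needed */
} core_ctx_t;

/* Any thread: claim a slot with one CAS on `tail`, then publish the op. */
static bool submit(core_ctx_t *c, long op) {
    size_t pos = atomic_load_explicit(&c->tail, memory_order_relaxed);
    for (;;) {
        msg_slot_t *s = &c->slots[pos & (RING_SIZE - 1)];
        size_t seq = atomic_load_explicit(&s->seq, memory_order_acquire);
        intptr_t diff = (intptr_t)seq - (intptr_t)pos;
        if (diff == 0) {
            if (atomic_compare_exchange_weak_explicit(&c->tail, &pos, pos + 1,
                    memory_order_relaxed, memory_order_relaxed)) {
                s->op = op;
                atomic_store_explicit(&s->seq, pos + 1, memory_order_release);
                return true;
            } /* on failure `pos` holds the fresh tail; retry */
        } else if (diff < 0) {
            return false; /* ring full */
        } else {
            pos = atomic_load_explicit(&c->tail, memory_order_relaxed);
        }
    }
}

/* Core thread only: a single consumer, so no CAS is needed. */
static bool take(core_ctx_t *c, long *op) {
    msg_slot_t *s = &c->slots[c->head & (RING_SIZE - 1)];
    if (atomic_load_explicit(&s->seq, memory_order_acquire) != c->head + 1)
        return false; /* ring empty */
    *op = s->op;
    atomic_store_explicit(&s->seq, c->head + RING_SIZE, memory_order_release);
    c->head++;
    return true;
}

typedef struct { core_ctx_t *c; int ops; } producer_arg_t;

static void *producer(void *p) {
    producer_arg_t *a = p;
    for (long i = 1; i <= a->ops; i++)
        while (!submit(a->c, i))
            sched_yield(); /* ring full: back off and retry */
    atomic_fetch_sub(&a->c->producers_left, 1);
    return NULL;
}

static void *core_thread(void *p) {
    core_ctx_t *c = p;
    long op;
    for (;;) {
        if (take(c, &op)) {
            c->state += op; /* apply the operation */
        } else if (atomic_load(&c->producers_left) == 0) {
            while (take(c, &op)) /* drain anything left, then exit */
                c->state += op;
            return NULL;
        } else {
            sched_yield();
        }
    }
}

/* Start one core thread and `nprod` submitters; return the final state. */
static long run_demo(int nprod, int ops) {
    core_ctx_t c;
    pthread_t core, prod[MAX_PRODUCERS];
    producer_arg_t arg = { &c, ops };
    if (nprod < 0 || nprod > MAX_PRODUCERS)
        return -1;
    for (size_t i = 0; i < RING_SIZE; i++)
        atomic_init(&c.slots[i].seq, i);
    atomic_init(&c.tail, 0);
    atomic_init(&c.producers_left, nprod);
    c.head = 0;
    c.state = 0;
    pthread_create(&core, NULL, core_thread, &c);
    for (int i = 0; i < nprod; i++)
        pthread_create(&prod[i], NULL, producer, &arg);
    for (int i = 0; i < nprod; i++)
        pthread_join(prod[i], NULL);
    pthread_join(core, NULL);
    return c.state;
}
```

The core thread busy-polls the ring, which is exactly the "dedicated CPU core" cost noted on the slide. On older glibc versions, build with `-pthread`.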

vhost-user + virtio
- Fast movement of data between processes (zero copy)
- Based on shared memory with lockless ring buffers
- Processes exchange data without involving the kernel
[Diagram: the legacy app (vhost-user client) and SPDK (vhost-user server) share a table with data pointers, an avail ring buffer, and a used ring buffer.]
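The data path in the diagram follows the virtio split-virtqueue layout: a descriptor table holding buffer addresses and lengths, an available ring through which the driver side (here the legacy app, as vhost-user client) publishes descriptor heads, and a used ring through which the device side (SPDK, as vhost-user server) returns completions. The sketch below simulates both sides in one process using field names from the virtio specification; it leaves out the shared-memory setup, descriptor chaining, notifications, and the memory barriers a real cross-process implementation needs, so treat it as a model of the protocol rather than a working vhost-user client.

```c
#include <stdint.h>
#include <string.h>

#define QSZ 8 /* queue size, power of two */
#define VRING_DESC_F_WRITE 2 /* device writes into this buffer */

struct vring_desc { uint64_t addr; uint32_t len; uint16_t flags; uint16_t next; };
struct vring_avail { uint16_t flags; uint16_t idx; uint16_t ring[QSZ]; };
struct vring_used_elem { uint32_t id; uint32_t len; };
struct vring_used { uint16_t flags; uint16_t idx; struct vring_used_elem ring[QSZ]; };

struct vq {
    struct vring_desc desc[QSZ];  /* the "table with data pointers" */
    struct vring_avail avail;     /* driver -> device */
    struct vring_used used;       /* device -> driver */
    uint16_t last_avail;          /* device side: next avail entry to consume */
    uint16_t last_used;           /* driver side: next used entry to reap */
};

/* Driver (client) side: publish a buffer for the device to fill. */
static void driver_submit(struct vq *q, uint16_t head, void *buf, uint32_t len) {
    q->desc[head] = (struct vring_desc){ (uint64_t)(uintptr_t)buf, len,
                                         VRING_DESC_F_WRITE, 0 };
    q->avail.ring[q->avail.idx % QSZ] = head;
    q->avail.idx++; /* across processes, a write barrier must precede this */
}

/* Device (server) side: consume available descriptors and complete them.
 * Here "reading from disk" just fills each buffer with a fixed byte. */
static int device_process(struct vq *q, unsigned char fill) {
    int done = 0;
    while (q->last_avail != q->avail.idx) {
        uint16_t head = q->avail.ring[q->last_avail % QSZ];
        struct vring_desc *d = &q->desc[head];
        memset((void *)(uintptr_t)d->addr, fill, d->len);
        q->used.ring[q->used.idx % QSZ] = (struct vring_used_elem){ head, d->len };
        q->used.idx++;
        q->last_avail++;
        done++;
    }
    return done;
}

/* Driver side: reap one completion; returns the descriptor id or -1. */
static int driver_reap(struct vq *q, uint32_t *len) {
    if (q->last_used == q->used.idx)
        return -1;
    struct vring_used_elem e = q->used.ring[q->last_used % QSZ];
    q->last_used++;
    *len = e.len;
    return (int)e.id;
}
```

Only descriptor indices and lengths travel through the rings; the data itself stays in the buffers both processes can address, which is what makes the path zero-copy.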

vhost-user client implementation
- A minimalistic library has been implemented for prototyping: https://github.com/openebs/vhost-user
- It is meant to be embedded in a legacy application, replacing its read/write calls
- Provides a simple API for read and write operations, with sync and async variants
- The storage device to open is a UNIX domain socket on which the SPDK vhost server listens, instead of a traditional block device
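A common way to offer both sync and async variants is to build the synchronous call on top of the asynchronous one: submit the request, then poll for completions until its callback fires. The sketch below shows that layering against an in-memory stand-in for the SPDK vhost server. All names (`vu_dev_t`, `vu_read_async`, `vu_poll`, `vu_read`) are hypothetical and are not the API of the openebs/vhost-user library, which talks to SPDK over the vhost-user UNIX domain socket and shared virtqueues instead.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define DEV_SIZE 4096
#define MAX_INFLIGHT 16

typedef void (*vu_cb_t)(void *arg, int status);

typedef struct {
    void *buf; size_t len; uint64_t off; vu_cb_t cb; void *arg;
} vu_req_t;

typedef struct {
    unsigned char media[DEV_SIZE]; /* stand-in for the SPDK-backed disk */
    vu_req_t inflight[MAX_INFLIGHT];
    int n;
} vu_dev_t;

/* Async read: queue the request and return immediately. */
static int vu_read_async(vu_dev_t *d, void *buf, size_t len, uint64_t off,
                         vu_cb_t cb, void *arg) {
    if (d->n == MAX_INFLIGHT || off > DEV_SIZE || len > DEV_SIZE - off)
        return -1;
    d->inflight[d->n++] = (vu_req_t){ buf, len, off, cb, arg };
    return 0;
}

/* Complete all queued requests; a real client would reap the used ring.
 * In this sketch, callbacks must not submit new requests. */
static int vu_poll(vu_dev_t *d) {
    int done = d->n;
    for (int i = 0; i < d->n; i++) {
        vu_req_t *r = &d->inflight[i];
        memcpy(r->buf, d->media + r->off, r->len);
        r->cb(r->arg, 0);
    }
    d->n = 0;
    return done;
}

static void sync_done(void *arg, int status) {
    *(int *)arg = status;
}

/* Sync read built on the async path: submit, then poll until done. */
static int vu_read(vu_dev_t *d, void *buf, size_t len, uint64_t off) {
    int status = 1; /* 1 = still pending */
    if (vu_read_async(d, buf, len, off, sync_done, &status) != 0)
        return -1;
    while (status == 1)
        vu_poll(d);
    return status;
}
```

With this layering, a legacy `read()` call site can be switched to `vu_read()` with little change, while code that can overlap IOs uses the async variant directly.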

Results
- Our baseline is SPDK with the NVMe userspace driver
- The vhost-user library can achieve around 90% of native SPDK's performance if tuned properly
- Said differently, the overhead of the virtio library can be as low as 10%
- For comparison, SPDK with libaio, which simulates what could have been achieved with a traditional IO interface, achieves 80%

Future work
- The results may not be compelling yet, but this is not the end
- SPDK scales nicely with the number of CPUs. If vhost-user can scale too, the gap between traditional IO interfaces and vhost-user could grow
- This requires more work on the vhost-user library: adding support for multiple vrings so that IOs can be issued to SPDK in parallel
- The concern remains whether legacy apps could fully utilize this potential without a major rewrite